If your team is still running two-week sprints, writing detailed stories, and using AI mostly for autocomplete, you’re getting a fraction of what’s available. AI change management is a leadership problem, and it requires a leadership-level solution.

What follows draws from Mainsail AI Labs’ direct work helping portfolio companies build AI capabilities thoughtfully and deliberately. These aren’t predictions. They’re patterns we see working right now — practices that are transforming how product and technology organizations operate, and that we believe are increasingly necessary to capture the full value of AI for your team and your customers.

Our recent Mainsail AI Launch Labs event reinforced this directly: when teams restructured how they worked — not just which tools they used — the acceleration was significant. The experience showed us just how much is possible when process changes and AI tools move together.

Start with Pain Relief, Not Productivity Theater

The fastest path to real AI adoption in your engineering organization isn’t a mandate — it’s a win. Find the most tedious, low-creativity tasks your engineers do repeatedly and automate those first.

The tasks that tend to unlock buy-in quickly include writing quality assurance plans, drafting responses to pull request comments, and generating boilerplate code for repetitive patterns. These aren’t glamorous. That’s the point. When an engineer’s first experience removes something they genuinely hated doing, they want more.

One thing to watch for: adoption will not be uniform. You will have engineers who are deeply engaged and running complex agent-based workflows, and engineers who haven’t gotten past a basic tool yet. Both groups need a path forward. Don’t design your rollout only for the early adopters.

The Real Bottleneck is Your Operating Model, Not Your Tools

Here is what is consistent across teams making genuine progress: they are changing how they work, not just what tools they use.

Two-week sprints built around detailed story-writing are becoming a constraint. The handoff — Product Manager (PM) writes a full story with acceptance criteria, engineer implements it, QA reviews it — was designed for a world where generating and reviewing code took time. That friction is gone. The process is now the bottleneck.

The teams ahead of this are moving to one-week cadences with short briefs instead of detailed stories. Product managers write the intent and the outcome, engineers flesh out the approach, and the team spends the week orchestrating agents to complete the project. No one spends hours manually writing acceptance criteria that an agent can generate in seconds.

AI change management is not a process question — it’s a leadership question. If your org structure and operating model look the same by the end of this summer, the tools won’t matter. The shift in how your team operates is the actual proof point that adoption has taken hold.

What Your Product Managers Should Be Doing Differently

We believe one of the most underutilized opportunities right now is getting your product leaders closer to the code — not to write it, but to query it.

Every PM on your team should have the codebase available locally and be able to ask questions of it through an agent. Not “show me the function that handles user authentication” but “what would break if we changed how billing works?” or “where does the product currently bottleneck for enterprise customers?”

The practical result: PMs stop asking engineers questions that an agent can answer in thirty seconds. Engineers get fewer interruptions. The “no, that won’t work” conversation happens earlier, with better information, and takes less time for everyone.

Guardrails Matter More than Tool Selection

There is a temptation to spend a lot of energy picking the best AI tool. The companies making the most progress have largely stopped worrying about this because the tools leapfrog each other constantly. Whatever is best today may not be best in four months.

What does not change as fast: the standards, guardrails, and shared practices that determine how your team uses any tool. The teams we see ahead of this are investing in:

- Centralized skills libraries — shared prompts, standards, and agent behaviors installed across all project repositories, so every engineer is working from the same baseline.

- Pre-commit checks — automated linting and standards enforcement that runs before every AI-assisted commit, catching mistakes before they become code review problems.

- Mistake logging — a lightweight system where agent corrections are auto-logged, new sessions read the log to avoid repeating errors, and repeated mistakes get promoted to hard rules.

- Knowledge living next to the code — context your agents need to do good work should be in the codebase, not buried in an external wiki no one reads.
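The mistake-logging practice above can be sketched in a few lines. This is a minimal illustration under our own assumptions, not a description of any particular team's system: the JSON Lines file format, the function names, and the promotion threshold of three are all hypothetical choices.

```python
import json
from collections import Counter
from pathlib import Path

# Assumed threshold: a mistake seen this many times becomes a hard rule.
PROMOTE_AFTER = 3


def log_correction(log_path: Path, mistake: str, fix: str) -> None:
    """Append one agent correction to the log as a single JSON line."""
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps({"mistake": mistake, "fix": fix}) + "\n")


def load_corrections(log_path: Path) -> list:
    """Read every logged correction; an absent log means no history yet."""
    if not log_path.exists():
        return []
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


def hard_rules(entries: list, threshold: int = PROMOTE_AFTER) -> list:
    """Return the mistakes repeated often enough to be promoted to rules."""
    counts = Counter(entry["mistake"] for entry in entries)
    return sorted(m for m, n in counts.items() if n >= threshold)


def session_preamble(log_path: Path, threshold: int = PROMOTE_AFTER) -> str:
    """Build the text a new agent session reads before it starts work."""
    entries = load_corrections(log_path)
    rules = hard_rules(entries, threshold)
    # If a mistake was corrected several times, the most recent fix wins.
    fixes = {entry["mistake"]: entry["fix"] for entry in entries}
    lines = ["Hard rules (repeated mistakes):"]
    lines += [f"- NEVER: {m}. Instead: {fixes[m]}" for m in rules]
    lines.append("Past corrections to keep in mind:")
    lines += [f"- {m}. Fix: {fix}" for m, fix in fixes.items() if m not in rules]
    return "\n".join(lines)
```

Keeping the log as a plain file in the repository also serves the last practice in the list: the knowledge sits next to the code, where both agents and engineers can read it.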

On tool selection, set a default stack your team can pick up and run with. Identify one or two people per team who naturally evaluate new tools and let them vet what gets added. Don’t make this a committee decision every time something new ships.

Don’t Let One Provider Become a Single Point of Failure

If your team is committing to one-week delivery using agentic workflows and a provider goes down, you have a problem. This is not hypothetical — it happens often. Address this risk now by keeping an eye on provider reliability, and make sure your team knows what to do if a primary tool is unavailable. Having two tools in your stack means you can route around an outage, even if it’s just for a few hours.
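For teams that script their agent calls, routing around an outage can be as simple as trying providers in order. The sketch below is an assumption-laden illustration: `run_with_fallback` and `AllProvidersFailed` are hypothetical names, and each provider is represented by a plain callable rather than any real vendor SDK.

```python
from typing import Callable, Sequence, Tuple


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider failed for a request."""


def run_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, response).

    `providers` is an ordered list of (name, callable) pairs, primary first.
    Each callable is a stand-in for whatever client your team actually uses.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # an outage, timeout, or rate limit
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))
```

Returning the provider name alongside the response makes it easy to log how often the fallback path is used, which is one concrete way to keep an eye on provider reliability.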

What AI Change Management Looks Like in Practice: Six Actions

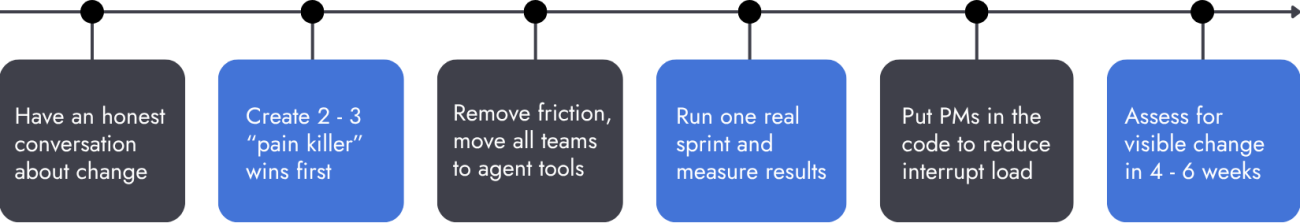

- Hold an all-hands with engineering and product together. This is not a rollout announcement but an honest conversation about what is changing and why. People need to understand the direction before they can move in it.

- Build two or three “pain killer” automations in the first month. Target the tasks engineers complain about most. Early wins create pull. People come looking for more rather than needing to be pushed.

- Move everyone off autocomplete-only tools. They are a starting point, not a destination. Your engineers should be working with CLI-based agent tools. Remove the friction and cover the cost. We support this investment at the board level.

- Pilot a one-week sprint cadence with brief-based planning on one team. Don’t redesign your entire process at once. Run the experiment somewhere real, measure what changes, and let the results make the case.

- Get your product managers onto agent tools with codebase access. In our experience, this change reduces engineer interrupt load and accelerates product decision-making more than most teams expect.

- Set a review checkpoint in four to six weeks. Honestly assess whether your operating model is visibly changing. That is the signal that matters. It’s not a checkbox.

The indicator that this is actually working is not how many AI tools your team has licensed. It’s whether your org structure and operating model are visibly different. If you’re still running two-week sprints with a traditional PM-to-engineer handoff a few months from now, the adoption hasn’t taken hold, and you’re paying for tools that aren’t changing outcomes.